Prior to the mid-18th century, it was tough to be a sailor — you couldn’t set out for a specific destination and have any real hope of finding it quickly if the trip required east-west travel.

At the time, sailors had no reliable method for measuring longitude, the coordinate that measures how far east or west one is from the prime meridian. The key to longitude was accurate timekeeping, as the English watchmaker John Harrison knew, and clocks just weren’t accurate enough yet.

To measure distance, measure time

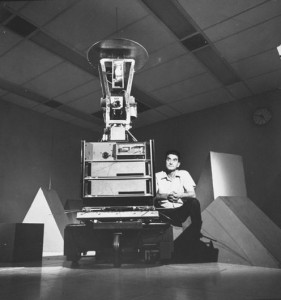

“If you want to measure distances well, you really need an accurate clock,” said Clayton Simien, an NSF-funded physicist at the University of Alabama-Birmingham. His current research on cutting-edge atomic clocks was inspired, while he was an undergraduate, by Dava Sobel’s book “Longitude: The True Story of a Lone Genius Who Solved the Greatest Scientific Problem of His Time” (Walker & Co., 2001).

By the 1700s, sailors had figured out they could measure latitude by studying the sun and its location at various times of the day, so north-south travel was not so problematic. Unlike the equator, however, the place where longitude equals zero, known as the prime meridian, does not have a basis in nature. As evidenced by several relocations of the prime meridian, located in Greenwich, England since 1884, its placement is arbitrary. After all, who’s to say whose daybreak starts the Earth’s next rotation?

“How you define time is pretty much arbitrary in the sense that in the past we defined a year by using how long it takes the earth to rotate around the sun,” Simien said. “So, basically, any periodic, consistent motion can be the basis for a clock. I used to joke with my relatives that I can say that time is how long it takes me to walk up and down five flights of stairs, while eating a bag of Doritos. But that wouldn’t be a good definition of time. Some days I might be tired, so I move slower. You wouldn’t want to base time on something that can vary so much.”

Sailors figured out that as they traveled east, time moved ahead: the sun set earlier than expected, for example. In fact, based on current parameters for time, for every 15 degrees of longitude a person moves east, the local time moves ahead an hour. That meant longitude could be roughly measured by comparing the time of day in two places: a ship’s location and its departure port. But, like climbing stairs while eating chips, such measurements also require standards, which for those sailors meant building a clock from materials that didn’t rust and didn’t swell or contract with heat and cold, preserving a reference for the time “back home.”
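The 15-degrees-per-hour rule can be turned into a small calculation. The sketch below is only an illustration: the function name and the sign convention (east positive) are assumptions, and it presumes both clock readings refer to the same instant.

```python
DEGREES_PER_HOUR = 15.0  # 360 degrees of longitude / 24 hours


def longitude_offset_degrees(local_hours: float, home_hours: float) -> float:
    """Degrees east (+) or west (-) of the home port.

    local_hours: local time at the ship, in hours (e.g. 12.0 at solar noon)
    home_hours:  time shown by the clock still set to the home port
    """
    diff = local_hours - home_hours
    # Wrap into [-12, 12) so 01:00 local vs 23:00 home reads as +2 h, not -22 h.
    diff = (diff + 12.0) % 24.0 - 12.0
    return diff * DEGREES_PER_HOUR


# Local solar noon while the home-port clock reads 14:00:
print(longitude_offset_degrees(12.0, 14.0))  # prints -30.0 (30 degrees west)
```

By the same rule, a clock that drifts by four minutes puts the computed longitude off by one degree, which is why the reference clock had to be so stable.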

Harrison, that English watchmaker, put together a clock of wooden wheels, replacing the prior state of the art, a pendulum, with a design that used something called a grasshopper escapement. On its first voyage in 1736, the clock helped identify a 60-mile course divergence for his ship. Harrison went on to build the first compact marine chronometer, for which he was eventually rewarded under the Longitude Prize.

The quest to improve timekeeping continues today, as scientists look at new materials that are even more rugged and precise, eliminating variables that might distort accurate timekeeping.

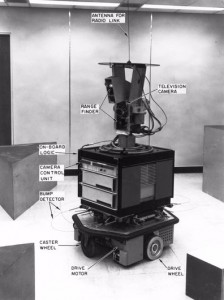

Atomic clocks in GPS satellites work with ground-based clocks so that positioning signals are synchronized as much as possible. Atmospheric distortions present challenges that can limit signal accuracy beyond the most precise atomic clock’s scope. So, while the U.S. Air Force operates the more than 30 GPS satellites in orbit, several government agencies, including NSF, the U.S. National Institute of Standards and Technology, the U.S. Department of Defense, and the U.S. Navy are invested in atomic clock research and technology.

But today’s research isn’t just about building a more accurate timepiece. It’s about foundational science that has other ramifications.

One second equals one ‘Mississippi’ or ~9 billion atom oscillations

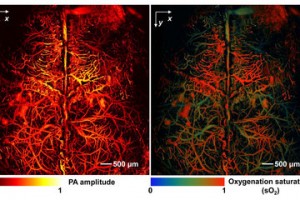

Atomic clocks precisely measure the ticks of atoms, the back and forth transition between two different atomic states. The atoms, commonly cesium, can transfer from the ground state to an excited state, but only if the frequency is just right. The trick to this process is finding just the right frequency to move directly between the two states and overcoming errors, such as Doppler shifts, that distort rhythm.

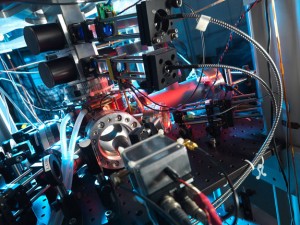

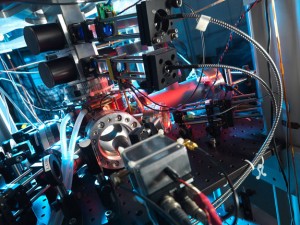

Today’s most accurate atomic clocks use laser-beam photons to “cool” atoms to low temperatures, to within a millionth of a degree of absolute zero. This reduces Doppler shifts and provides a long time to observe the atoms, which improves an atomic clock’s precision.
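To give a feel for why cooling matters, the sketch below estimates how fast a cesium atom typically moves at a given temperature and the size of the resulting first-order Doppler shift, v/c. It assumes an ideal-gas rms speed, sqrt(3kT/m), and an approximate cesium-133 mass; real clocks involve further effects that this does not model.

```python
import math

K_B = 1.380649e-23           # Boltzmann constant, J/K
C = 299_792_458.0            # speed of light, m/s
AMU = 1.66053906660e-27      # atomic mass unit, kg
CESIUM_MASS = 132.905 * AMU  # approximate mass of one cesium-133 atom, kg


def rms_speed(temperature_k: float, mass_kg: float = CESIUM_MASS) -> float:
    """Root-mean-square thermal speed of an ideal-gas atom, in m/s."""
    return math.sqrt(3.0 * K_B * temperature_k / mass_kg)


def fractional_doppler_shift(speed_m_s: float) -> float:
    """First-order Doppler shift as a fraction of the transition frequency."""
    return speed_m_s / C


for label, temp in (("room temperature, 300 K", 300.0),
                    ("laser-cooled, 1 microkelvin", 1e-6)):
    v = rms_speed(temp)
    print(f"{label}: speed ~ {v:.3g} m/s, "
          f"shift ~ {fractional_doppler_shift(v):.1e}")
```

Under these assumptions, the atoms slow from a few hundred meters per second at room temperature to roughly a centimeter per second when laser-cooled, and the Doppler shift shrinks by the same factor.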

Laser technology has helped to better control the atoms, such as with optical lattices that can layer atoms into “pancakes” or egg-carton-like structures, immobilizing them and helping eliminate Doppler shifts altogether.

The official “rhythm” associated with the energy difference between the ground state and excited state of those cesium atoms, better known as the atomic transition frequency, yields something equivalent to the official definition of a second: 9,192,631,770 cycles of the radiation that gets a cesium atom to vibrate between those two energy states.
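As a rough illustration of what that number means, the arithmetic below converts the cesium frequency into the duration of a single cycle and counts cycles in an arbitrary interval. The function names are made up for this sketch.

```python
CESIUM_HZ = 9_192_631_770  # cycles per second, per the definition of the second


def cycle_period_seconds() -> float:
    """Duration of one cesium cycle, in seconds."""
    return 1.0 / CESIUM_HZ


def cycles_in(seconds: float) -> float:
    """Number of cesium cycles that fit into the given interval."""
    return seconds * CESIUM_HZ


print(f"one cycle lasts {cycle_period_seconds():.3e} s")  # about 1.088e-10 s
print(f"cycles in one nanosecond: {cycles_in(1e-9):.2f}")
```

So a microwave cesium clock "ticks" only about nine times per nanosecond, which hints at why a higher-frequency transition offers finer time resolution.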

Future atomic clocks

Today’s atomic clocks mostly still use cesium. According to NSF-funded physicist Kurt Gibble at Pennsylvania State University, the biggest advance in future atomic clocks will be a switch from measuring atoms oscillating at microwave frequencies to those oscillating at optical frequencies.

Today’s atomic clocks in GPS satellites, cell phone towers, the U.S. Naval Observatory’s master clock, and many other places in the world are microwave frequency clocks. These are the only clocks, at this point, that keep reliable time, Gibble said, even though optical clocks promise significantly more accuracy. “Just the higher frequency makes it a lot easier to be more accurate,” he added. “So far, optical standards don’t run for long enough to keep time, but they will soon.”

Gibble has an international reputation for assessing accuracy and improving microwave frequency clocks, including some of the most accurate clocks in the world: the cesium clocks at the United Kingdom’s National Physical Laboratory and the Paris Observatory in France. He is now exploring new optical clocks that could further improve this field.

Optical frequency clocks operate at a significantly higher frequency than the microwave ones, which is why many researchers are exploring their potential with different atoms, including alkaline earth and rare earth elements such as ytterbium, strontium and gadolinium.

Simien, whose research focuses on gadolinium, has studied minimizing or eliminating (if possible) key issues that limit accuracy. And recently, Gibble started work on another promising candidate, cadmium.

“Nowadays, the biggest obstacle, in my opinion, is the black body radiation shift,” Simien said. “The black body radiation shift is a systematic effect. We live in a thermal environment, meaning its temperature fluctuates. Even back in the day, a mechanical clock had pieces that would heat up and expand or cool down and contract. A clock’s accuracy varied with its environment. Today’s system is no longer mechanical and has better technology, but it is still susceptible to a thermal environment’s effects. Gadolinium is predicted to have a significantly reduced black body relationship compared to other elements implemented and being proposed as new frequency standards.”

According to Gibble, optical clocks are so accurate they would lose less than a second in the age of the universe, 13.8 billion years. And while Simien and Gibble agree that optical frequency atomic clock research represents the next generation of atomic clocks, taking accuracy to the next level, they recognize that most people don’t really care if the Big Bang happened 13 billion years ago or 13 billion years ago plus one second.
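The "one second in the age of the universe" figure can be restated as a fractional accuracy. The sketch below does that conversion, assuming a 365.25-day year; the function name is illustrative only.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year, 31,557,600 s
AGE_OF_UNIVERSE_YEARS = 13.8e9


def fractional_error(seconds_lost: float, over_years: float) -> float:
    """Clock error expressed as a fraction of the elapsed time."""
    return seconds_lost / (over_years * SECONDS_PER_YEAR)


accuracy = fractional_error(1.0, AGE_OF_UNIVERSE_YEARS)
print(f"fractional accuracy ~ {accuracy:.1e}")  # a couple of parts in 10^18
```

In other words, losing less than a second over 13.8 billion years corresponds to an error of a few parts in a billion billion.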

“It’s important to understand that one more digit of accuracy is not always just fine tuning something that is probably already good enough,” said John Gillaspy, an NSF program director who reviews funding for atomic clock research for the agency’s physics division. “Extremely high accuracy can sometimes mean a qualitative breakthrough which provides the first insight into an entirely new realm of understanding — a revolution in science.”

“Around the middle of the previous century, Willis Lamb measured a tiny frequency shift which led theorists to reformulate physics as we know it (not to mention earning him a Nobel Prize),” Gillaspy elaborated. “At a conference just this week, I heard a scientist discuss his idea to harness the GPS network’s precise timing to hunt for Dark Matter, one of the most outstanding problems in science today. Who knows when the next breakthrough will come, and whether it will be in the first digit or the 10th?

“Unfortunately, most people cannot appreciate why more accuracy matters, as evidenced in a recent blog post aimed at physicists in this field. The commenter wrote: ‘You’ve managed to find the single most depressing scientific endeavor of all time: Spend years of research trying to make an ultra-precise clock more precise. If they succeed, only electrons will notice’ … These scientists know that they are, in fact, doing the sort of work that can change the world.”

“Interstellar” and beyond

Atomic clock researchers point to GPS as the most visible application of the basic science they study, but it’s only one way this foundational work holds promise.

Many physicists expect it to provide insight that not only illuminates understanding of fundamental physics and general relativity, but also advances quantum computing, sensor development and other sensitive instrumentation that requires clever design to resist the natural force of gravity, magnetic and electrical fields, temperature and motion.

Financial analysts, too, worry about ill-synchronized clocks. On June 30, 2015, at 7:59:59 p.m. EDT, the world adds what is known as a “leap second” to keep solar time within 1 second of atomic time. Because history has shown that most clocks won’t do it correctly, and because the leap second falls in the middle of a business day in many parts of the world, many major financial markets are planning to shut down for a period of time around it. The concern is that millions could be lost in worldwide markets due to ill-synchronized clocks.

“The reason you want better clocks isn’t to get accurate time over a long period down to the second. It’s the importance of being able to measure small time differences,” Gibble said. “The GPS looks at the difference in time for light propagating from several GPS satellites. The thing to remember is that the speed of light is one foot per nanosecond. If you want to know where you are, several GPS satellites send out a signal — a radio broadcast that tells where the satellites are and what time the radio signal left the satellite. Your GPS receiver gets the signals and looks at the time differences of the signals, when they arrive compared to when they said they left.”

For GPS to guide us in deserts, tropical forests, oceans and other areas where there are no roads to serve as markers along the way, the satellites need clocks with nanosecond precision to keep us from getting lost.

“If you want to know where you are to a couple of feet, you need to have timing to a nanosecond — a billionth of a second, which is 10 to the minus 9 of a second,” added Gibble. “If you want that clock to be good for more than a day, then already you have to be at 10 to the minus 14. If you want the system to go for two weeks or longer, then you need something significantly better than that.”
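Gibble’s numbers can be checked with a few lines of arithmetic. The sketch below converts a timing error into a distance error using the speed of light, and then asks how stable a clock must be to stay within one nanosecond over a given interval. The function names are hypothetical, and the model ignores everything except the clock itself (atmosphere, satellite geometry and so on).

```python
C_M_PER_S = 299_792_458.0    # speed of light, m/s
FEET_PER_METER = 1 / 0.3048  # exact, by definition of the foot
SECONDS_PER_DAY = 86_400


def range_error_feet(timing_error_s: float) -> float:
    """Distance light travels during the timing error, in feet."""
    return timing_error_s * C_M_PER_S * FEET_PER_METER


def required_fractional_stability(timing_error_s: float,
                                  interval_s: float) -> float:
    """Largest fractional drift that keeps the error within budget."""
    return timing_error_s / interval_s


print(f"1 ns of error ~ {range_error_feet(1e-9):.2f} ft")  # just under 1 foot
print(f"1 ns over a day: "
      f"{required_fractional_stability(1e-9, SECONDS_PER_DAY):.1e}")
print(f"1 ns over two weeks: "
      f"{required_fractional_stability(1e-9, 14 * SECONDS_PER_DAY):.1e}")
```

Holding one nanosecond for a day works out to about one part in 10^14, matching the figure in the quote, and holding it for two weeks requires a clock more than ten times better.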

And then there’s the future to think about.

“Remember the movie, ‘Interstellar’?” Simien asks. “There is someone on a spaceship far away, Matthew McConaughey is on a planet in a strong gravitational field. He experiences reality in terms of hours, but the other individual back on the spacecraft experiences years. That’s general relativity. Atomic clocks can test this kind of fundamental theory and its various applications that make for fascinating science, and as you can see, also expand our lives.”

References: http://www.livescience.com/